Challenge

Cognitive science is fundamentally concerned with questions about the nature of mental representation and about how different representations interact to produce cognitive function (functional architecture). Each of these questions presents significant inferential challenges. In the case of functional architecture, it is extremely difficult to determine how different types of information (e.g., acoustic-phonetic representations and the lexicon) interact on the basis of post-interaction behavioral responses, low-temporal-resolution imaging, or patterns of precedence in neural activation. Similarly, because behavior always reflects a unique interaction between representation and process (the structure/process tradeoff), inferences about representation depend on assumptions about processing, and inferences about processing depend on assumptions about representation.

Response

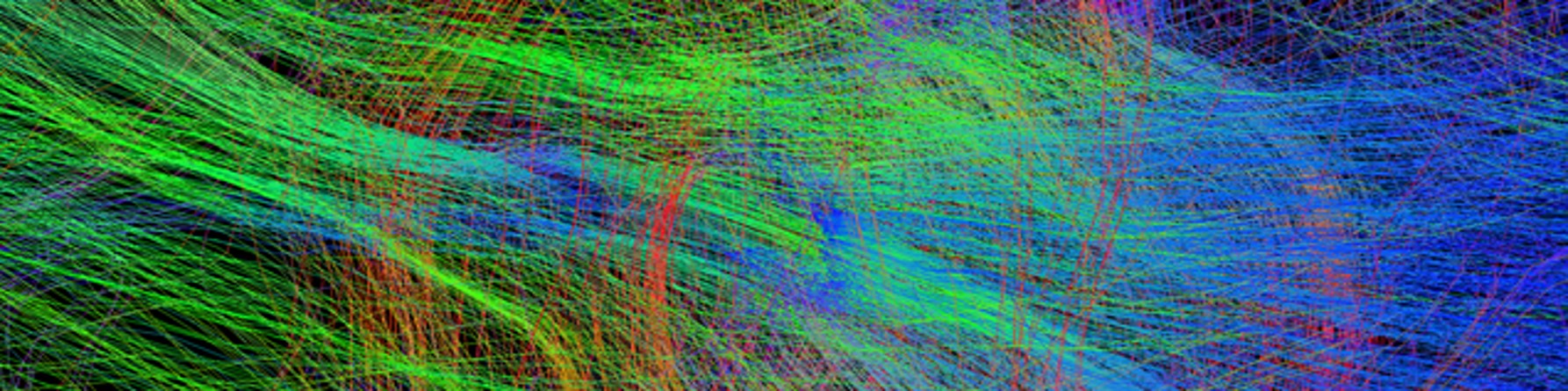

Our response to these challenges has been to adopt a research strategy that strengthens inferences about representation and functional architecture using cutting-edge neural techniques. To understand functional architecture, we combine functional neuroanatomical models of speech processing (see the dual lexicon model section below) with effective connectivity analyses (see the GPS section below) to read processing models directly from patterns of neural activation during task performance. To address the structure/process tradeoff and strengthen functional neuroanatomical models, we are working to incorporate neural decoding techniques (see the decoding section) into our processing stream so that we can directly examine both representation and processing interactions in a single dataset.